What a Proper Software Architecture Audit Actually Looks Like

Every few months we get the same call. Sometimes it's a CTO who inherited a platform she didn't build. Sometimes it's a private-equity partner doing pre-acquisition technical due diligence. Sometimes it's a founder who just got handed a six-figure quote for a "full rebuild" and wants a sanity check before signing.

The question is almost always the same: how healthy is this system, really — and what should we actually do about it?

That question deserves more than a gut feeling or a vendor's sales deck. It deserves a proper, independent software architecture audit. Here's what one actually looks like when it's done well — and what separates a genuinely useful audit from an expensive box-ticking exercise.

Why teams commission an architecture audit

The specific trigger varies, but it almost always comes down to one of five moments:

- Pre-acquisition or investment due diligence. You're about to buy a company, or invest in one, and the product is the asset. You need to know whether the codebase underneath the demo is a foundation or a liability.

- Inherited systems. A new CTO, a new engineering VP, or a new owner has taken over a platform they didn't design. They need a map of the terrain before they can lead confidently.

- Before a major rebuild. Someone has proposed a "ground-up rewrite" or a large modernization programme. Is that actually the right call, or would targeted refactoring get you 80% of the value for 20% of the cost?

- Legacy modernization & cloud migration planning. The system works, but it's tied to on-premise hardware, deprecated frameworks, or an operational model that's holding the business back. You need to understand what it would take to move.

- Regulatory or security review. A compliance audit, a failed pen test, or an enterprise customer's security questionnaire has surfaced questions you can't answer yet.

In all five cases, the common requirement is the same: an independent, evidence-based assessment that the people who built the system can't provide about themselves, and the people who want to sell you the next system shouldn't.

What a proper audit actually covers

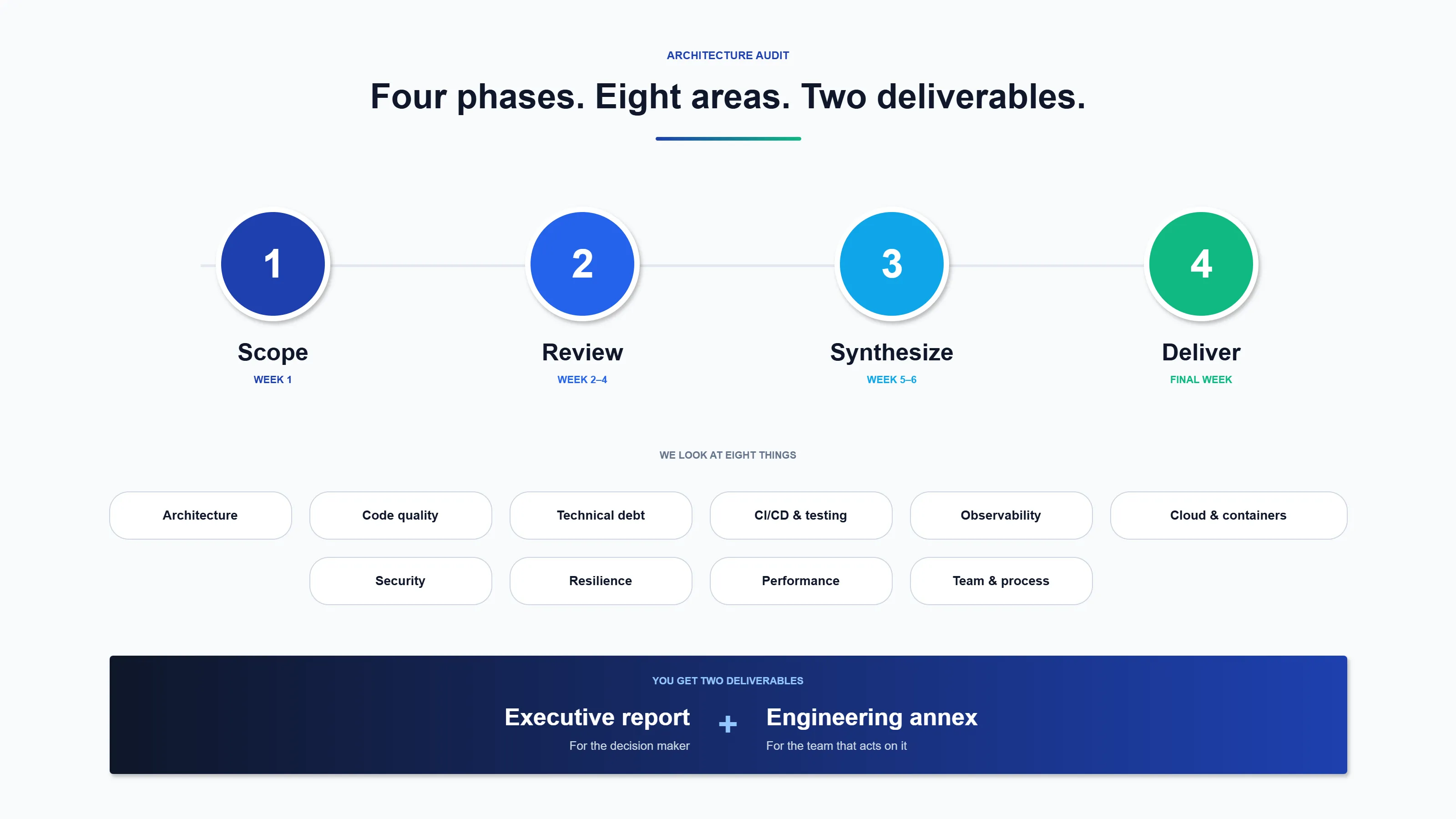

A real architecture audit is not a 20-minute code-review-with-a-price-tag. Done well, it's a structured forensic exercise across eight distinct areas, each producing its own findings.

1. Architecture & system design

How is the system decomposed? Are the boundaries between components real, or have they eroded into a distributed monolith with hidden coupling? How do data and events flow? Is the design fit for the scale it's actually operating at — not the scale the original slide deck imagined?

The goal here is a clear, honest diagram of what the system is today, not what someone hopes it is.

2. Codebase & component quality

We read the code. A lot of it. We look for consistency, clarity, appropriate abstractions, test coverage, and the telltale signs of code that was maintained versus code that was merely kept alive. Static analysis helps, but the judgement calls — "is this pattern deliberate or accidental?" — still require a senior engineer with their hands on the source.

3. Technical debt & legacy constraints

Every system has debt. The question is whether it's the kind you're consciously paying interest on, or the kind that's quietly accruing until something breaks. We identify deprecated frameworks, end-of-life runtimes, outdated design patterns, and the places where a short-term workaround has become load-bearing.

Crucially, we quantify it. "Significant technical debt" is not a finding. "Upgrading from version X will take roughly six engineer-weeks and unblocks Y and Z" is.
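To make "quantified" concrete, here is a minimal sketch of how a debt register might be recorded and ranked. The field names, the sample findings, and the priority formula are all illustrative assumptions, not a standard scoring method; a real audit would calibrate the weighting to the business.

```python
from dataclasses import dataclass, field


@dataclass
class DebtFinding:
    """One quantified technical-debt finding (all fields are illustrative)."""
    title: str
    effort_weeks: float                      # estimated engineer-weeks to remediate
    unblocks: list[str] = field(default_factory=list)
    risk: int = 1                            # 1 (low) to 5 (high), auditor's judgement

    def priority(self) -> float:
        # Assumed heuristic: higher risk and more unblocked work per
        # engineer-week of effort ranks a finding higher.
        return self.risk * (1 + len(self.unblocks)) / self.effort_weeks


# Hypothetical findings, for illustration only.
findings = [
    DebtFinding("Remove deprecated ORM layer", 10, ["read replicas"], risk=3),
    DebtFinding("Upgrade end-of-life runtime", 6, ["security patches", "new SDK"], risk=4),
    DebtFinding("Replace hand-rolled retry wrapper", 1, [], risk=1),
]

ranked = sorted(findings, key=lambda f: f.priority(), reverse=True)
for f in ranked:
    print(f"{f.priority():.2f}  {f.title} (~{f.effort_weeks:g} engineer-weeks)")
```

The point is not the formula but the shape of the record: every entry carries an effort estimate and a statement of what it unblocks, so the list can be argued with and re-prioritized.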

4. Engineering practices: CI/CD, testing, observability

The code is only half the picture. How does it get to production? We audit build pipelines, deployment workflows, automated test coverage, feature-flagging, rollback mechanisms, and observability — logging, metrics, tracing, alerting. The maturity of the delivery pipeline is usually a more honest signal of engineering health than the code itself.

5. Cloud & container readiness

Even if the system isn't cloud-native today, we assess how ready it is to become so:

- Is the architecture cloud-portable, or is it wedded to specific infrastructure?

- Is containerization used, and used well? (Docker, Kubernetes, ECS, AKS, GKE, or equivalents.)

- Is infrastructure managed as code — Terraform, Pulumi, Bicep, CloudFormation — or is it clicked together in a console?

- Are scalability and reliability patterns — autoscaling, circuit breakers, graceful degradation — actually present, or just aspirational?

For many audits, this section is the one that most directly drives the modernization roadmap.

6. Security & compliance posture

We look at authentication, authorization, secret management, dependency hygiene, patching discipline, threat modelling (or its absence), and the audit trail. Regulated industries get additional scrutiny here — data residency, access logging, encryption at rest and in transit, and whatever compliance regime actually applies.

This is not a substitute for a formal penetration test, but it reliably surfaces the class of issues that lead to failed audits and customer-facing incidents.

7. Operational performance & resilience

How does the system behave under load? Where are the bottlenecks? What's the blast radius when component X fails? We review real telemetry where it's available, and synthetic evidence where it isn't. We pay particular attention to the things you only learn on a bad Tuesday afternoon — backup strategies, disaster recovery plans, and whether anyone has actually tested them.
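One way to reason about blast radius is to treat the system as a dependency graph and ask which components sit upstream of a failure. The sketch below assumes a hand-written, hypothetical service map; in practice the graph would come from service discovery, tracing data, or interviews, and it ignores mitigations such as caches and fallbacks.

```python
from collections import deque

# Hypothetical service graph: each key depends on the services in its list.
depends_on = {
    "web": ["api"],
    "api": ["auth", "orders"],
    "orders": ["db", "queue"],
    "auth": ["db"],
    "reports": ["db"],
    "db": [],
    "queue": [],
}


def blast_radius(failed: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every service that directly or transitively depends on `failed`."""
    # Invert the graph: for each service, record who depends on it.
    dependents: dict[str, set[str]] = {name: set() for name in depends_on}
    for service, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(service)

    # Breadth-first walk up the inverted graph from the failed component.
    affected: set[str] = set()
    queue = deque([failed])
    while queue:
        current = queue.popleft()
        for parent in dependents.get(current, ()):
            if parent not in affected:
                affected.add(parent)
                queue.append(parent)
    return affected


print(sorted(blast_radius("db", depends_on)))
print(sorted(blast_radius("queue", depends_on)))
```

A component whose failure reaches most of the graph is where backup, failover, and tested recovery plans matter most.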

8. Team, process & third-party hygiene

Architecture isn't just technology. We assess engineering capacity, documentation practices, knowledge concentration ("what happens if three specific people quit?"), governance structures, and the tooling ecosystem. We also review third-party libraries and services for licence compliance and supply-chain risk.
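Knowledge concentration can be roughly approximated from version-control history. The sketch below assumes commit data has already been reduced to hypothetical (author, module) pairs, and the 70% threshold is an arbitrary illustration. Commit counts are a crude proxy, so a result like this is a prompt for interview questions rather than a finding in itself.

```python
from collections import Counter, defaultdict

# Hypothetical (author, module) pairs, e.g. extracted from commit history.
commits = [
    ("ana", "payments"), ("ana", "payments"), ("ana", "payments"), ("ben", "payments"),
    ("ben", "search"), ("cy", "search"), ("ana", "search"),
    ("cy", "billing"), ("cy", "billing"),
]


def top_author_share(commits):
    """Map each module to (top author, that author's share of the module's commits)."""
    per_module = defaultdict(Counter)
    for author, module in commits:
        per_module[module][author] += 1

    result = {}
    for module, counts in per_module.items():
        author, n = counts.most_common(1)[0]
        result[module] = (author, n / sum(counts.values()))
    return result


THRESHOLD = 0.7  # assumed cut-off for "concentrated"; tune per codebase

shares = top_author_share(commits)
for module, (author, share) in sorted(shares.items()):
    flag = "CONCENTRATED" if share >= THRESHOLD else "ok"
    print(f"{module:10s} {author:5s} {share:.0%}  {flag}")
```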

What you actually get at the end

A proper audit produces two deliverables, not one:

1. An executive report. Plain English, risk-based, prioritized. Written for the person who has to make the go/no-go call, not for the engineer who's going to fix the problems. This is the document that gets forwarded to boards, investors, and buyers.

2. A detailed engineering annex. The evidence, the code references, the specific findings, the remediation estimates. Written for the team that's going to act on it.

Both should be honest. A good audit is not a document of reassurance; it's a document of decision support. If everything looks fine, the report should say so clearly and move on. If there are real risks, they should be named, prioritized, and costed — not softened into corporate vagueness.

What separates a good audit from an expensive one

In our experience, three things make the difference:

- Independence. The auditor must have no incentive to recommend the rebuild, the migration, or the tool they happen to sell. A good audit sometimes concludes that the right answer is "do less than you were about to."

- Seniority of the auditor. This work requires someone who has personally designed, built, and operated systems at comparable complexity. Pattern-matching against experience is how you distinguish a genuine architectural flaw from a stylistic choice you'd have made differently.

- Specificity. Every finding should be falsifiable and actionable. "Improve testing" is not a recommendation. "Lift branch coverage on the payments module from 41% to 75%, estimated at ~3 engineer-weeks" is.

When to commission one

If you're inside any of the following windows, an audit will almost certainly save more than it costs:

- You're 30–90 days out from an acquisition, investment, or major contract that depends on the platform's health.

- You've just inherited a platform — as a new CTO, new owner, or new investor.

- You're being asked to approve a significant build or rebuild, and the proposal was written by the people who'd get the contract.

- You're modernizing toward cloud or containers and need an honest baseline before committing to a target architecture.

- You have a critical system that hasn't been independently reviewed in the last 18 months.

How we run architecture audits at Innovi Pro

We run audits as fixed-fee, fixed-timeline engagements, typically three to twelve weeks depending on the scope and number of products involved. We work from your source code, your infrastructure, and interviews with your team. We deliver the executive report and the engineering annex, walk stakeholders through the findings in person or over a call, and stay available for follow-up questions afterwards.

We work across industries — wherever there is a complex software system whose health is material to a business decision. The underlying discipline travels well: a serious audit of an insurance platform, a logistics system, a healthcare product, or an industrial SaaS looks remarkably similar under the hood.

If you're sitting inside one of the windows above, get in touch. A 30-minute call is usually enough to decide whether an audit is the right next step — and if it isn't, we'll tell you that too.