The 8-Dimension Architecture Audit: Find Your Weakest Link

Every engineering leader knows their architecture has problems. Almost none can tell you, in rank order, which problem to fix first. That is the gap this post closes.

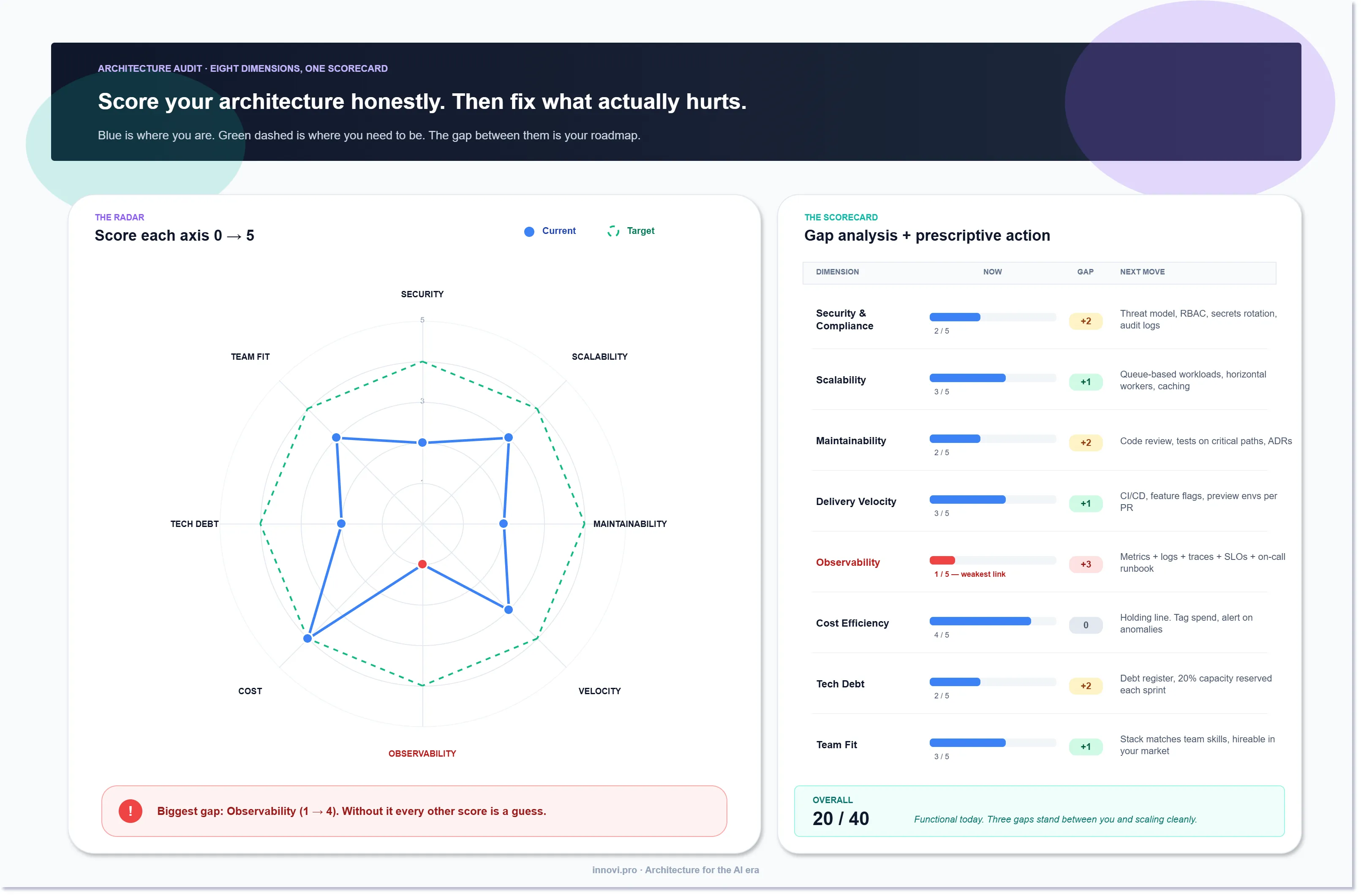

An architecture audit does not have to be a three-month McKinsey engagement. Ours takes a team two hours with the right template and returns a scorecard that survives contact with reality. Eight dimensions, scored 0-5, with a target score, a gap, and a prescribed next move for each one. Your lowest score is the weakest link — and until you fix it, every other score is a guess.

This article is the scorecard we use. Steal it.

Why eight dimensions

You can audit architecture with two dimensions or twenty. Both are wrong. Two misses too much. Twenty creates so much debate that nothing gets prioritized.

Eight is the sweet spot because it covers every failure mode we have actually seen in production without leaving room for ceremony. If your architecture is strong on all eight, your platform is probably fine. If it is weak on one, you know exactly where to put the next quarter of engineering time.

The eight:

- Security & Compliance — controls, threat model, auth, regulatory posture.

- Scalability — how the system behaves under 10x load.

- Maintainability — how fast a new engineer can ship a change safely.

- Delivery Velocity — time from "we should do X" to X being in prod.

- Observability — can you see what the system is doing in real time?

- Cost Efficiency — cost per unit of user value, and the trajectory of that ratio.

- Tech Debt — known shortcuts you are paying interest on.

- Team Fit — does the stack match the team's skills and the hiring market?

Not on the list: "code quality" (it's inside maintainability), "resilience" (split between scalability and observability), "documentation" (symptom of maintainability). Keep the list at eight and the conversation stays useful.

The scoring rubric

Each dimension scores 0 to 5 against a fixed rubric. You do not need to agree with our rubric — you need to pick one and use it consistently.

- 0 — Broken. Actively hurting the business right now.

- 1 — Neglected. No active work. Likely to break under any new pressure.

- 2 — Functional. Works in the happy path. Fails under stress.

- 3 — Competent. Works most of the time, with known gaps.

- 4 — Strong. Handles current and near-future load. Monitored.

- 5 — Best-in-class. A competitive advantage.

Most architectures end up scoring 1-3 on most dimensions, with one or two at 4+. That is normal. A system scoring 5s across the board is either brand new or being described by marketing.
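If you want the rubric somewhere more durable than a whiteboard, it fits in a few lines of code. The following is a minimal sketch, not part of the template itself: the names `Score`, `DIMENSIONS`, and `validate_scorecard` are our own assumptions for illustration.

```python
from enum import IntEnum


class Score(IntEnum):
    """The 0-5 rubric above, as named levels."""
    BROKEN = 0         # actively hurting the business right now
    NEGLECTED = 1      # no active work; likely to break under new pressure
    FUNCTIONAL = 2     # works in the happy path; fails under stress
    COMPETENT = 3      # works most of the time, with known gaps
    STRONG = 4         # handles current and near-future load; monitored
    BEST_IN_CLASS = 5  # a competitive advantage


DIMENSIONS = (
    "Security & Compliance", "Scalability", "Maintainability",
    "Delivery Velocity", "Observability", "Cost Efficiency",
    "Tech Debt", "Team Fit",
)


def validate_scorecard(scores: dict[str, int]) -> dict[str, Score]:
    """Check that all eight dimensions are present and on the 0-5 scale."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    # Score(n) raises ValueError for anything outside 0-5.
    return {d: Score(scores[d]) for d in DIMENSIONS}


card = validate_scorecard({d: 3 for d in DIMENSIONS})
print(card["Observability"].name)  # COMPETENT
```

Using a fixed enum is one way to enforce "pick one rubric and use it consistently": nobody can quietly score a 3.5.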

Walking each dimension

Here is what to look at per dimension, in the order we assess them. Treat these as the minimum probe; add your own organization-specific checks.

1. Security & Compliance

- Is there a written threat model? When was it last updated?

- Is auth centralized (SSO, RBAC) or ad-hoc?

- Are secrets rotated, or pasted into env files?

- For regulated domains (HIPAA, PCI, SOC 2): do you have current evidence, not just intent?

- When was the last pen test and did the findings close?

If score is below 3: threat model, audit trail, secrets rotation, and an identity-aware proxy are the fastest lifts.

2. Scalability

- What is the current peak load, and what happens at 10x it?

- Is work queue-based or synchronous?

- Can you add compute without re-architecting?

- Is the database a bottleneck you can name?

If score is below 3: queues and horizontal workers. Most "we can't scale" problems are "we synchronously do everything in one process" problems.

3. Maintainability

- How long until a new engineer can ship their first PR safely?

- Do tests exist on critical paths?

- Are architectural decisions documented somewhere other than someone's head?

- Can you make a small change without reading the whole system?

If score is below 3: mandatory code review, tests on the five most business-critical paths, and ADRs (architecture decision records) for anything non-trivial.

4. Delivery Velocity

- What is the time from commit to production?

- How often does a release cause an incident?

- Do you have CI/CD or "CI/CD" (manual button, scripts on someone's laptop)?

- Feature flags, or direct-to-prod toggles?

If score is below 3: real CI/CD, preview environments per PR, and feature flags so you can ship and roll back fast.
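The first two questions above are measurable if you keep even a rough record of releases. Here is a sketch of how you might compute them, assuming a hypothetical `Deploy` record with a commit time, a deploy time, and an incident flag. The sample data is made up.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass(frozen=True)
class Deploy:
    """One production release. Field names are hypothetical."""
    committed_at: datetime
    deployed_at: datetime
    caused_incident: bool


def median_lead_time(deploys: list[Deploy]) -> timedelta:
    """Median time from commit to production."""
    seconds = [(d.deployed_at - d.committed_at).total_seconds() for d in deploys]
    return timedelta(seconds=median(seconds))


def change_failure_rate(deploys: list[Deploy]) -> float:
    """Fraction of releases that caused an incident."""
    return sum(d.caused_incident for d in deploys) / len(deploys)


# Made-up sample data: four releases, one of which caused an incident.
t0 = datetime(2024, 1, 8, 9, 0)
deploys = [
    Deploy(t0, t0 + timedelta(hours=1), False),
    Deploy(t0, t0 + timedelta(hours=2), False),
    Deploy(t0, t0 + timedelta(hours=4), True),
    Deploy(t0, t0 + timedelta(hours=30), False),
]
print(median_lead_time(deploys))     # 3:00:00
print(change_failure_rate(deploys))  # 0.25
```

The median is used here rather than the mean so that one slow release (the 30-hour one) does not dominate the number.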

5. Observability

This is where most organizations score lowest. It is also where the lowest score hurts most, because without observability every other score is an educated guess.

- Do you have metrics, logs, and traces — all three?

- Are SLOs defined and alerted on?

- Can you answer "what was the p95 latency of endpoint X yesterday" in under five minutes?

- Is there an on-call rotation with a real runbook?

If score is below 3: drop whatever is second priority and fix this first. We mean it.
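For reference, the p95 question is not asking for anything exotic. Given yesterday's latency samples for one endpoint, a nearest-rank percentile is a few lines. This is a sketch under the assumption that you can export raw samples; most metrics backends compute percentiles for you, often with a different interpolation method, so treat the function below as an illustration rather than a match for any particular tool.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Made-up data: 100 requests with latencies of 1 ms through 100 ms.
latencies_ms = [float(ms) for ms in range(100, 0, -1)]
print(percentile(latencies_ms, 95))  # 95.0
```

If producing that number for a real endpoint takes you more than five minutes, that is the finding.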

6. Cost Efficiency

- What is your cost per active user / per transaction / per customer?

- Is the trajectory improving or worsening month over month?

- Do you have cost alerts tied to anomalies?

- What percentage of spend is on idle resources?

If score is below 3: tag everything, set a cost anomaly alert, right-size the top three spend lines. Don't start with "let's migrate clouds" — start with "let's stop paying for things nobody uses."
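The first two questions are simple arithmetic once you have monthly spend and a unit count. A minimal sketch, using made-up figures and hypothetical function names:

```python
def unit_costs(spend: list[float], units: list[int]) -> list[float]:
    """Cost per unit (user, transaction, customer) for each month."""
    if len(spend) != len(units):
        raise ValueError("spend and units must cover the same months")
    return [s / u for s, u in zip(spend, units)]


def month_over_month(ratios: list[float]) -> list[float]:
    """Fractional change in unit cost from each month to the next.
    Negative means the ratio is improving."""
    return [(cur - prev) / prev for prev, cur in zip(ratios, ratios[1:])]


# Made-up figures: total spend rises every month, but so do active users.
spend = [10_000.0, 11_000.0, 12_000.0]
active_users = [2_000, 2_500, 3_000]

costs = unit_costs(spend, active_users)  # 5.0, 4.4, 4.0 per active user
changes = month_over_month(costs)        # about -12%, then about -9%
print([round(c, 3) for c in changes])
```

Note what the example shows: the bill went up 20% over the period while cost per active user went down. The ratio and its trajectory are what you score, not the raw bill.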

7. Tech Debt

- Is there a written debt register, or just a shared sense that "things are messy"?

- Do sprint plans reserve capacity for debt (15-20% is a healthy floor)?

- Which debt is active (compounding, hurting velocity today) vs. dormant (not pretty, not hurting)?

- Is anyone tracking the top 5 items?

If score is below 3: a one-page debt register and a standing 20% per sprint. You will not pay it all down. You will pay down the worst of it, which is what matters.

8. Team Fit

- Does your stack match your team's actual skills, or your lead's preferences from five years ago?

- Can you hire for this stack in your market?

- Are engineers productive or fighting the stack?

- Is any critical system owned by exactly one person?

If score is below 3: re-evaluate the stack choices honestly. "We use Scala because our staff engineer loves it" is not a team-fit argument when you are trying to hire three more engineers and the market has none.

The gap column matters more than the score

The score tells you where you are. The gap — target score minus current score — tells you what to do.

Pick your target score per dimension based on the business context, not the ideal. A three-person startup does not need Security at 5. A healthcare platform absolutely does. Set targets that match your risk tolerance and growth stage.

Then sort by gap size. The biggest gap is your top priority this quarter, not the largest dimension or the most fashionable topic. If your observability gap is +3 and your scalability gap is +1, observability wins, even if everyone on the team wants to talk about scalability.
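The gap calculation and the sort can be sketched in a few lines. The `Row` type and the sample scores are assumptions for illustration; the observability and scalability gaps match the +3 and +1 example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    """One line of the scorecard."""
    dimension: str
    current: int
    target: int

    @property
    def gap(self) -> int:
        # Gap is target score minus current score.
        return self.target - self.current


def prioritize(rows: list[Row]) -> list[Row]:
    """Largest gap first. sorted() is stable, so ties keep input order."""
    return sorted(rows, key=lambda r: r.gap, reverse=True)


# Made-up scores for three of the eight dimensions.
rows = [
    Row("Scalability", current=3, target=4),            # gap +1
    Row("Observability", current=1, target=4),          # gap +3
    Row("Security & Compliance", current=2, target=4),  # gap +2
]
for row in prioritize(rows):
    print(f"{row.dimension}: +{row.gap}")
# Observability: +3
# Security & Compliance: +2
# Scalability: +1
```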

The weakest link rule

In any system of dimensions, the overall user experience is capped by the weakest one. A platform with world-class scalability and broken observability is a platform that will fail spectacularly and you will not know why for hours.

This is why the audit is worth plotting as a radar chart rather than a bar chart. A bar chart encourages you to optimize the tallest bar because it looks rewarding. A radar chart forces you to look at the shape of the polygon, and an unbalanced polygon is an unstable platform.

Balanced 3s across all eight dimensions beat a profile with one 5 and three 1s. Every single time.
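To make the rule concrete, here is a small sketch comparing two made-up profiles. The helper `weakest_link` is a hypothetical name, and the choice to fill the remaining four dimensions of the lopsided profile with 3s is our own assumption.

```python
from statistics import mean


def weakest_link(scores: dict[str, int]) -> tuple[str, int]:
    """Return the lowest-scoring dimension and its score.
    On ties, the first one in the scorecard wins."""
    name = min(scores, key=lambda d: scores[d])
    return name, scores[name]


balanced = {
    "Security & Compliance": 3, "Scalability": 3, "Maintainability": 3,
    "Delivery Velocity": 3, "Observability": 3, "Cost Efficiency": 3,
    "Tech Debt": 3, "Team Fit": 3,
}
# One 5, three 1s, and (by assumption) 3s everywhere else.
lopsided = {
    "Security & Compliance": 3, "Scalability": 5, "Maintainability": 3,
    "Delivery Velocity": 3, "Observability": 1, "Cost Efficiency": 1,
    "Tech Debt": 1, "Team Fit": 3,
}

print(weakest_link(balanced), mean(balanced.values()))
# ('Security & Compliance', 3) 3
print(weakest_link(lopsided), mean(lopsided.values()))
# ('Observability', 1) 2.5
```

The averages are not far apart. The floors are, and the floor is what the rule says to look at.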

How to run the audit

Ninety minutes with three people is enough for a first pass.

- Schedule 90 minutes with your tech lead, a senior engineer, and a product-side stakeholder.

- Score live. Each person silently scores each dimension 0-5. Reveal together. Discuss any dimension where the individual scores differ by 2 or more points. The conversation is where the value is.

- Set targets. For each dimension, pick a target for 12 months out. Be realistic, not aspirational.

- Sort by gap. Rank dimensions by target - current, descending.

- Pick top three. Assign an owner and a first move for each. Put them in your next quarter's plan.
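The reveal step is easy to do by eye with three people, but here is a sketch of the check if you collect scores in a form or spreadsheet. The scorer labels, the sample ballots, and the function name are all made up for illustration.

```python
def needs_discussion(
    ballots: dict[str, dict[str, int]], threshold: int = 2
) -> dict[str, int]:
    """ballots maps scorer -> {dimension: score}.
    Returns dimension -> spread for every dimension where the highest
    and lowest individual scores differ by at least `threshold`."""
    dimensions = next(iter(ballots.values())).keys()
    flagged = {}
    for dim in dimensions:
        votes = [ballot[dim] for ballot in ballots.values()]
        spread = max(votes) - min(votes)
        if spread >= threshold:
            flagged[dim] = spread
    return flagged


# Made-up silent scores for three of the eight dimensions.
ballots = {
    "tech_lead":       {"Observability": 3, "Scalability": 4, "Tech Debt": 2},
    "senior_engineer": {"Observability": 1, "Scalability": 3, "Tech Debt": 2},
    "product":         {"Observability": 2, "Scalability": 4, "Tech Debt": 4},
}
print(needs_discussion(ballots))
# {'Observability': 2, 'Tech Debt': 2}
```

A wide spread usually means people are looking at different evidence, which is exactly what the discussion is for.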

That's it. No Confluence wiki required, though you'll want to write the scorecard down somewhere you can look at it in six months and see whether the numbers moved.

Re-score every quarter

A one-shot audit is useful. A quarterly re-score is transformative. Two reasons:

- Scores drift. Things you fixed can regress. Things you ignored can get worse. The dimensions you were not thinking about last quarter are often where problems appear.

- Targets should evolve. As the business grows, what counts as "good" changes. A score of 3 on scalability was fine at 1,000 users and is a crisis at 100,000.

Track the eight scores over time and the trend tells you more than any single snapshot. Flat scores = organization running in place. Rising scores = maturity. Falling scores = something important is breaking and nobody has named it yet.
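A sketch of that trend check, assuming you keep each dimension's quarterly scores in a list, oldest first. Comparing the latest score to the earliest is the simplest possible rule and is our assumption here; you may prefer to compare against last quarter only.

```python
def trend(history: list[int]) -> str:
    """Classify a dimension's quarterly scores (oldest first) by
    comparing the latest score to the earliest."""
    if len(history) < 2:
        raise ValueError("need at least two quarters to see a trend")
    delta = history[-1] - history[0]
    if delta > 0:
        return "rising"
    if delta < 0:
        return "falling"
    return "flat"


# Made-up history: three quarterly re-scores for three dimensions.
history = {
    "Observability": [1, 2, 3],
    "Scalability": [3, 3, 3],
    "Tech Debt": [3, 2, 2],
}
for dimension, scores in history.items():
    print(f"{dimension}: {trend(scores)}")
# Observability: rising
# Scalability: flat
# Tech Debt: falling
```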

What we do with it

When Innovi Pro runs this audit with a client, the first session is the scorecard. The second session is a 90-day plan against the top three gaps. The third session, 90 days later, is the re-score.

Most of our long engagements start this way. Not because the audit is the product — it isn't — but because without it, every recommendation is just opinion. With it, the roadmap argues for itself.

The scorecard is free. You don't need us to run it. You do need to be honest on the scoring, which is the hardest part, and the easiest to skip.

Run it next week. Find the weakest link. Fix that one thing. Your architecture will already be better than 80% of the platforms in your category, because 80% of them haven't done this exercise at all.